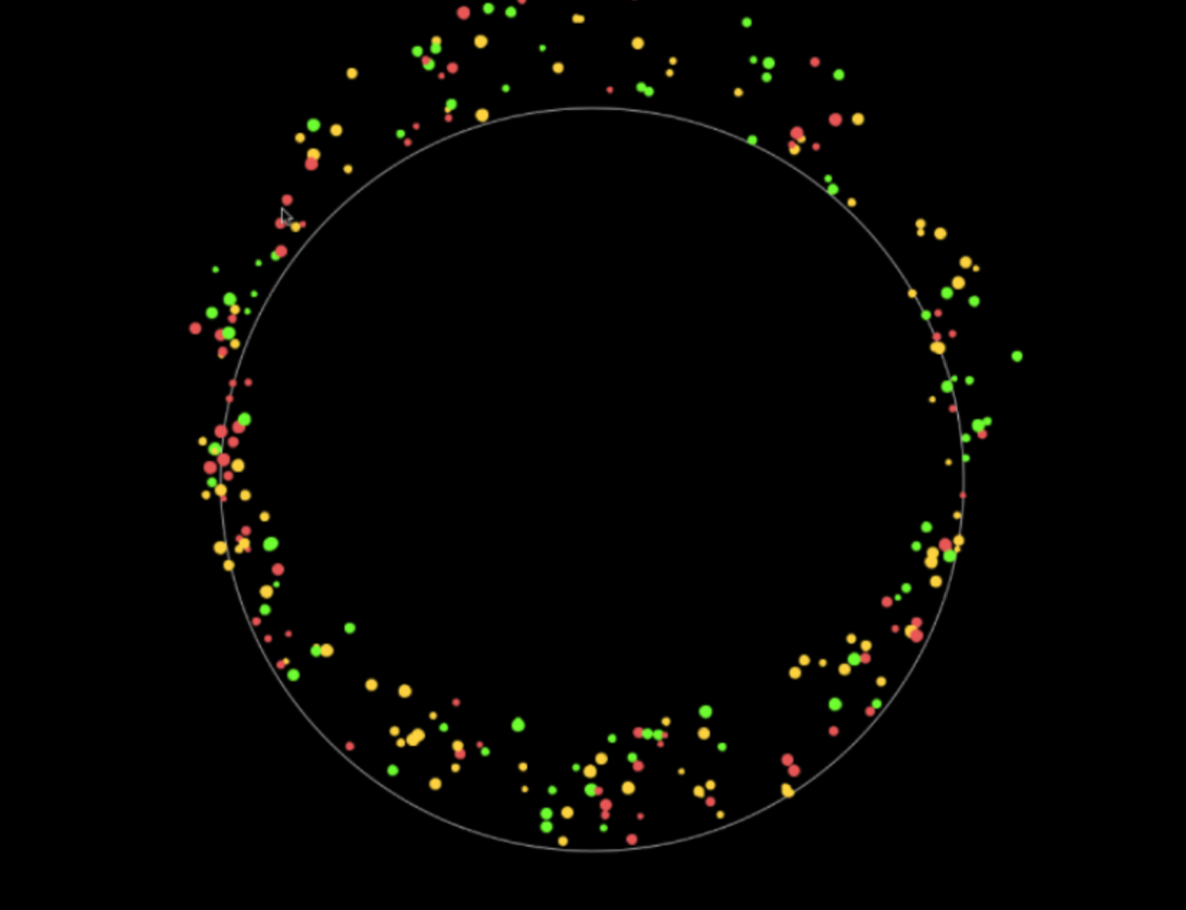

TactEye is a ubiquitous sensory solution for social gatherings that measures and expresses tactile, audio, motion, and light input to create a computational art display representing social collective experience.

Current audio and visual mediums do not fully capture the multidimensional nature of human social interaction.

Although mediums like cell phones and cameras are capable of simple recording and playback, they do not take advantage of the other rich multi-sensory inputs a social environment may contain, such as touch, sound, and light. This leads us to ask: how can we better capture social experiences and move beyond pure audio-visual inputs to multi-sensory artifacts of memory? How can we condense multi-sensory data into an abstract representation? Does interacting with this instantaneous representation enrich social interactions?

TactEye’s customizable structure uses a combination of touch, light, distance, and sound sensors to collect data about its surroundings and interactions, and creates a computational art representation of these events. To read more about the design & functionalities of TactEye, please go to:

or contact Egem Yorulmaz directly at ey2300@columbia.edu